New rules for US national security agencies balance AI’s promise with need to protect against risks

WASHINGTON (AP) — New rules from the White House on the use of artificial intelligence by U.S. national security and spy agencies aim to balance the technology’s immense promise with the need to protect against its risks.

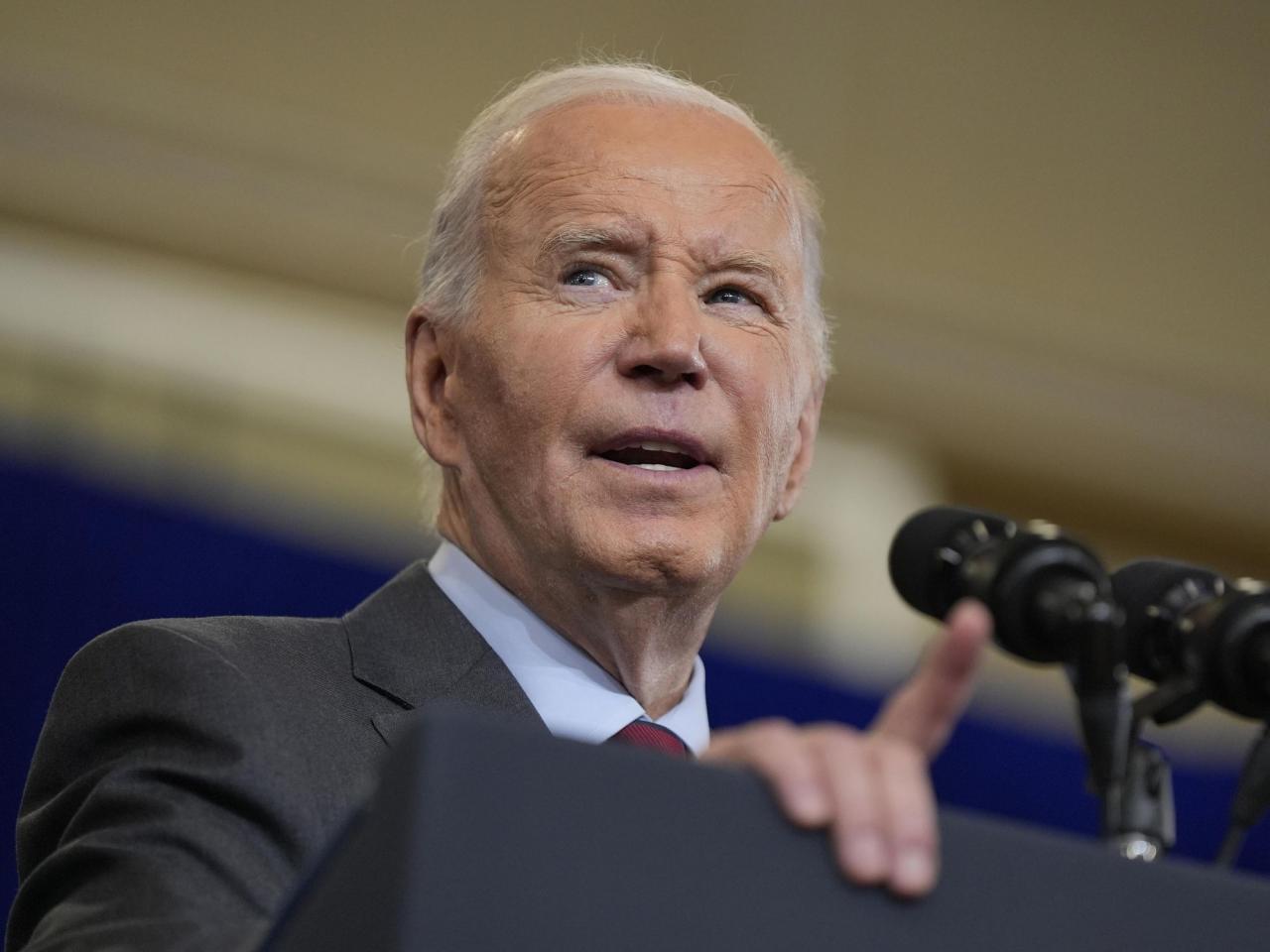

The framework signed by President Joe Biden and announced Thursday is designed to ensure that national security agencies can access the latest and most powerful AI while also mitigating its misuse.

Recent advances in artificial intelligence have been hailed as potentially transformative for a long list of industries and sectors, including the military, national security and intelligence. But the technology's use by government also carries risks, including the possibility that it could be harnessed for mass surveillance, cyberattacks or even lethal autonomous devices.

“This is our nation’s first-ever strategy for harnessing the power and managing the risks of AI to advance our national security,” national security adviser Jake Sullivan said as he described the new policy to students during an appearance at the National Defense University in Washington.

The framework directs national security agencies to expand their use of the most advanced AI systems while also prohibiting certain uses, such as applications that would violate constitutionally protected civil rights or any system that would automate the deployment of nuclear weapons.

Other provisions encourage AI research and call for improved security of the nation’s computer chip supply chain. The rules also direct intelligence agencies to prioritize work to protect the American industry from foreign espionage campaigns.

Civil rights groups have closely watched the government’s increasing use of AI and expressed concern that the technology could easily be abused.

The American Civil Liberties Union said Thursday the government was giving too much discretion to national security agencies, which would be allowed to “police themselves.”

“Despite acknowledging the considerable risks of AI, this policy does not go nearly far enough to protect us from dangerous and unaccountable AI systems,” Patrick Toomey, deputy director of ACLU’s National Security Project, said in a statement. “If developing national security AI systems is an urgent priority for the country, then adopting critical rights and privacy safeguards is just as urgent.”

The guidelines were created following an ambitious executive order signed by Biden last year that called on federal agencies to create policies for how AI could be used.

Officials said the rules are needed not only to ensure that AI is used responsibly but also to encourage the development of new AI systems and see that the U.S. keeps up with China and other rivals also working to harness the technology’s power.

Sullivan said AI is different from past innovations that were largely developed by the government: space exploration, the internet and nuclear weapons and technology. Instead, the development of AI systems has been led by the private sector.

Now, he said, it is “poised to transform our national security landscape.”

Several AI industry figures contacted by The Associated Press praised the new policy, calling it an essential step in ensuring America does not yield a competitive edge to other nations.

Chris Hatter, chief information security officer at Qwiet.ai, a tech company that uses AI to scan for weaknesses in computer code, said he thought the policy should attract bipartisan support.

Without such a policy in place, he said, the U.S. might fall behind on the "most consequential technology shift of our time."

“The potential is massive,” Hatter said. “In military operations, we’ll see autonomous weaponry — like the AI-powered F-16 and drones — and decision support systems augmenting human intelligence.”

AI is already reshaping how national security agencies manage logistics and planning, improve cyber defenses and analyze intelligence, Sullivan said. Other applications may emerge as the technology develops, he said.

Lethal autonomous drones, which are capable of taking out an enemy at their own discretion, remain a key concern about the military use of AI. Last year, the U.S. issued a declaration calling for international cooperation on setting standards for autonomous drones.

Source: wral.com